Last week was a turbulent one. Google and OpenAI faced competition from open-source models, governments discussed the threat of AI, and writers, who may already be feeling the effects of AI, went on strike.

Now let's review the big AI news from last week.

May 2nd

Hollywood writers strike over pay and AI - Dailynewsegypt

The Writers Guild of America (WGA) went on strike on May 2nd. The main reasons were low pay for writers and opposition to Hollywood using AI to replace them.

https://en.wikipedia.org/wiki/2023_Writers_Guild_of_America_strike

May 3rd

Microsoft is reportedly preparing to offer a version of ChatGPT that runs on dedicated servers for users concerned about privacy leaks, but it will cost extra.

May 4th

An internal Google document was shared on a public Discord server. In it, a Google researcher argued that neither Google nor OpenAI has a "moat," that is, a sustainable competitive advantage. Instead, the researcher believes that open-source models will be the ultimate winners of the AI race, and praised LoRA, a technique that allows models to be fine-tuned at very low cost and in very little time.

The document also includes a detailed timeline of the progress open-source models have made since the beginning of the year, which is quite valuable.
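To make the low-cost claim about LoRA concrete, here is a rough back-of-the-envelope sketch. It assumes the standard LoRA formulation, in which a frozen weight matrix of shape d × k is adapted by two small trainable matrices of shapes d × r and r × k. The 4096 × 4096 layer size and the rank of 8 are illustrative assumptions, not figures from the leaked document.

```python
def full_finetune_params(d: int, k: int) -> int:
    """Trainable parameters when the whole d x k weight matrix is updated."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for a rank-r LoRA adapter on a d x k matrix.

    The adapter consists of two matrices, B (d x r) and A (r x k),
    while the original weight matrix stays frozen.
    """
    return r * (d + k)


# Illustrative values only (assumptions, not taken from the article).
d, k, r = 4096, 4096, 8

full = full_finetune_params(d, k)
lora = lora_params(d, k, r)

print(f"Full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {r}):   {lora:,} trainable parameters")
print(f"LoRA trains {lora / full:.2%} of the layer's parameters")
```

Under these assumptions, the adapter trains a small fraction of one percent of the layer's weights, which is roughly why fine-tuning with LoRA needs so much less memory and time than updating every weight.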

On the same day, Bing Chat announced plugin support and said it will introduce chat persistence, chat history, and richer answer formats (such as charts). It is now fully open to everyone, with no waitlist.

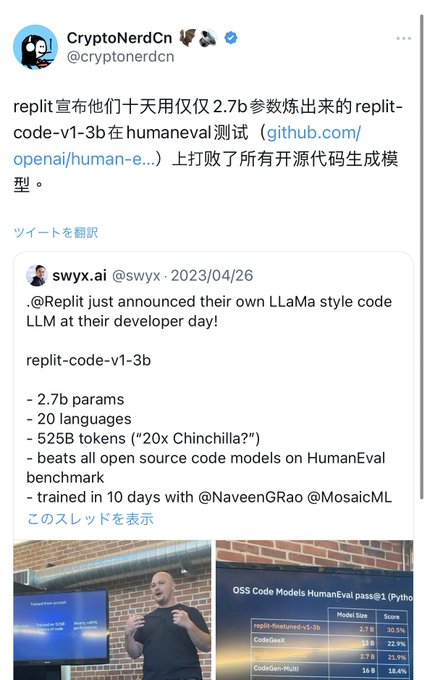

On the same day, Replit officially released replit-code-v1-3b, which it claims is the best-performing code LLM.

May 5th

US President Joe Biden met at the White House with the CEOs of leading artificial intelligence companies, including Google and Alphabet CEO Sundar Pichai, Microsoft CEO Satya Nadella, and OpenAI CEO Sam Altman. The meeting emphasized both the potential of AI and the dangers it may pose to people worldwide.

https://www.whitehouse.gov/briefing-room/statements-releases/2023/05/04/readout-of-white-house-meeting-with-ceos-on-advancing-responsible-artificial-intelligence-innovation/

On the same day, Hugging Face released its own code LLM, StarCoder, which it claims is the best open-source code LLM on the market.

StarCoder and StarCoderBase are large language models for code (code LLMs) trained on permissively licensed data from GitHub, covering more than 80 programming languages as well as Git commits, GitHub issues, and Jupyter notebooks. Similar to LLaMA, StarCoderBase is a ~15B-parameter model trained on 1 trillion tokens. StarCoder is a version of StarCoderBase fine-tuned on 35 billion Python tokens. StarCoderBase is claimed to outperform existing open-source code LLMs on popular programming benchmarks and to match or exceed closed models such as OpenAI's code-cushman-001 (which powered early versions of GitHub Copilot).

Related links:

https://huggingface.co/bigcode

May 7th

Is being a prompt engineer a good job? It may be now, but it won't be in the future, at least according to LoganK, a developer on staff at OpenAI. He went so far as to say that even if someone pays you to do it, you shouldn't treat it as a career, because it lacks the foundation for "long-term success."

His argument, in short: many people are focusing on artificial intelligence, thinking about how it will disrupt the job market and trying to position themselves for the future. This is 100% the right thing to do. Most media narratives hold that prompt engineering will be the best job of the future. The problem is that more and more prompt engineering will be done by AI systems themselves. There are already plenty of good examples of this in products, and it will only get better. One day, ChatGPT will synthesize your previous conversations and automatically engineer prompts for your queries based on that context. This doesn't mean that people who deeply understand how to use these systems won't have value, but he imagines it will become a skill learned as part of a standard education rather than a special talent possessed by a few, as it is today.

If you found this article helpful, please subscribe and share. You can also follow me on Twitter, where I post more about Web3, Layer2, AI, and Japan-related news: